MVP design decisions: What to build, what to skip, what to test

The strategic framework that separates successful startups from the 70% that fail within twelve months

Here’s something that keeps us up at night: watching brilliant startup founders make design decisions that doom their MVP before a single line of code is written. Over the past two years, we’ve worked with startups across different markets, and we’ve seen the same pattern repeatedly.

The statistics are sobering. According to CB Insights analysis, seven out of ten digital products fail within twelve months, with 42% of startups failing because they build products without market need. Yet companies implementing structured MVP development strategies reduce failure rates by 60%, launch 40% faster, and decrease development costs by up to 50%.

The difference between success and failure often hinges on design decisions made at the beginning of the MVP journey. Poor MVP design creates compounding problems that become far more expensive to fix the later they are discovered.

The real cost of design decisions

Imagine you’re six months into development, and user testing reveals that your core user flow confuses everyone. What started as a “small usability issue” becomes a six-figure problem.

According to Design Rush research, a bug caught during design costs roughly one-sixth as much to fix as the same bug caught during coding, and one-hundredth as much as one discovered in production.
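
To put those ratios into numbers, here is a minimal Python sketch. The multipliers (1, 6, 100) follow directly from the figures quoted above; the baseline cost of 500 and the names `STAGE_MULTIPLIER` and `fix_cost` are our own illustrative assumptions, not data from any project.

```python
# Relative cost multipliers derived from the ratios quoted above:
# a design-stage fix costs 1/6 of a coding-stage fix and 1/100 of a production fix.
STAGE_MULTIPLIER = {"design": 1, "coding": 6, "production": 100}


def fix_cost(design_stage_cost: float, stage: str) -> float:
    """Scale a design-stage fix cost to the stage where the issue is actually caught."""
    return design_stage_cost * STAGE_MULTIPLIER[stage]


if __name__ == "__main__":
    baseline = 500.0  # hypothetical cost of fixing the issue during design
    for stage in STAGE_MULTIPLIER:
        print(f"{stage:>10}: {fix_cost(baseline, stage):,.0f}")
```

Under these assumptions, an issue that costs 500 to fix on the design board costs 3,000 once it is coded and 50,000 once it is live.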

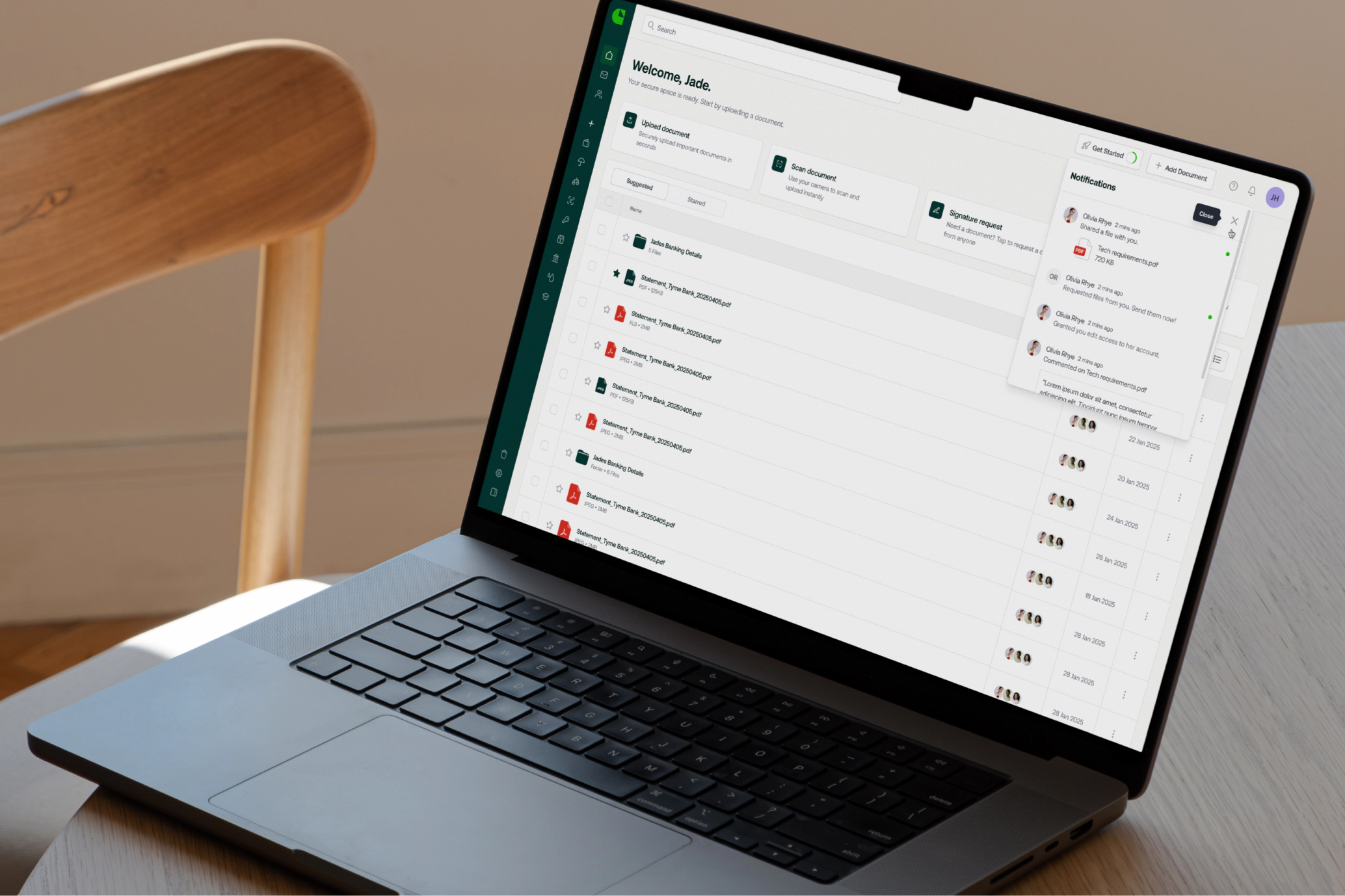

One of our fintech clients learned this firsthand. They initially designed a comprehensive dashboard with multiple features, believing more functionality would impress users. User testing revealed the opposite, and the broader data explains why: 74% of potential customers will switch to a competitor if the onboarding process is too complicated. We restructured their MVP around core features, and their completion rate improved significantly.

Our practical tip: Before adding any feature to your MVP scope, ask yourself: “Would users still get core value if we removed this entirely?” If yes, save it for Version 2.

Smart prioritisation frameworks

Feature creep affects nearly 52% of projects, leading to budget overruns and missed deadlines. The most successful startups combine multiple prioritisation frameworks:

MoSCoW Method (Must have, Should have, Could have, Won’t have) works brilliantly for smaller MVPs with tight constraints. It forces explicit in-or-out decisions that eliminate ambiguity.

RICE Scoring ((Reach × Impact × Confidence) ÷ Effort) provides quantitative ranking across larger feature lists. This works best for teams that can estimate impact and reach reliably.

Kano Model categorises features as basic expectations, performance features, or delighters. This prevents teams from wasting resources on performance improvements when basic expectations remain unmet.
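
To make the RICE formula above concrete, here is a minimal Python sketch that scores and ranks a small backlog. The feature names and every estimate in `backlog` are hypothetical, as are the units we chose (reach as users per quarter, effort as person-months); substitute your own.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    reach: float       # users affected per quarter (hypothetical unit)
    impact: float      # relative impact per user, e.g. 0.5, 1, 2
    confidence: float  # 0.0 to 1.0, how much we trust the estimates
    effort: float      # person-months (hypothetical unit), must be positive


def rice_score(feature: Feature) -> float:
    """(Reach x Impact x Confidence) / Effort, as defined in the text."""
    if feature.effort <= 0:
        raise ValueError("effort must be positive")
    return (feature.reach * feature.impact * feature.confidence) / feature.effort


def rank(features: list[Feature]) -> list[Feature]:
    """Return features ordered from highest to lowest RICE score."""
    return sorted(features, key=rice_score, reverse=True)


# Hypothetical backlog, for illustration only.
backlog = [
    Feature("guided onboarding", reach=800, impact=2.0, confidence=0.8, effort=2),
    Feature("analytics dashboard", reach=300, impact=1.0, confidence=0.5, effort=4),
    Feature("one-click signup", reach=1000, impact=1.0, confidence=0.9, effort=1),
]

if __name__ == "__main__":
    for feature in rank(backlog):
        print(f"{feature.name:<22} {rice_score(feature):8.1f}")
```

With these made-up numbers, one-click signup ranks first, guided onboarding second, and the analytics dashboard a distant third. The ranking is only as good as the reach and impact estimates behind it.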

The insight that changed our approach: the most successful MVPs validate using both quantitative and qualitative methods simultaneously.

What to build: The brutal prioritisation that wins

The most successful MVPs follow a simple formula: solve one problem exceptionally well, rather than solving multiple problems adequately.

Apps with good onboarding experiences have 50% higher retention rates, while the average onboarding completion rate across SaaS companies is only 19.2%. Complex authentication, multi-step setup, or feature-heavy tutorials push bounce rates up sharply.

Standard MVP feature set by complexity level:

- Simple MVPs: 3–5 core features addressing one primary pain point

- Medium complexity: 6–10 features, with clear primary and secondary user journeys

- Complex MVPs: 10–15 features, but always structured around a single value proposition

Our approach: Every feature must pass the “core value test.” If removing a feature prevents users from experiencing your primary value proposition, it stays. Everything else waits for Version 2.
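
The core value test can be sketched as a simple filter. This is an illustration under our own assumptions: the `Candidate` type, the `blocks_core_value_if_removed` flag, and the example features are all hypothetical, and deciding the flag for each feature remains a human judgement.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    # True if users could NOT experience the primary value proposition without it.
    blocks_core_value_if_removed: bool


def split_scope(candidates: list[Candidate]) -> tuple[list[str], list[str]]:
    """Split candidate features into (MVP scope, Version 2 backlog)."""
    mvp = [c.name for c in candidates if c.blocks_core_value_if_removed]
    version_2 = [c.name for c in candidates if not c.blocks_core_value_if_removed]
    return mvp, version_2


# Hypothetical feature list for an invoicing product.
candidates = [
    Candidate("create and send invoice", True),
    Candidate("payment link", True),
    Candidate("custom report builder", False),
    Candidate("team roles and permissions", False),
]

if __name__ == "__main__":
    mvp, version_2 = split_scope(candidates)
    print("MVP:", mvp)
    print("Version 2:", version_2)
```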

What to skip: Strategic omissions that accelerate validation

The most expensive MVP features are often the ones that seem essential but don’t drive user adoption. We’ve identified five categories that consistently consume resources without driving early validation:

Advanced analytics and reporting: Most startups prematurely build complex dashboards before validating that users care about the data being reported.

Comprehensive user management systems: Role-based access controls and permission hierarchies can begin manually, saving months of development time.

Premium tiers and subscription logic: Monetisation mechanisms should wait until after product-market fit validation.

Multi-language support: Unless geographic expansion is immediate, building localisation infrastructure postpones validation.

Mobile app (when web MVP suffices): Web MVPs can launch 25–40% faster than mobile apps since they skip app store approval processes.

The key insight: manual fulfilment workflows often outperform automation in MVP stages. Concierge MVPs reveal genuine user workflows without over-engineering.

What to test: Validation methods that predict success

Startups that include user research in MVP planning significantly outperform those that skip it, but most founders test the wrong things at the wrong time.

Landing page validation costs next to nothing and tests core value propositions before you build anything. We’ve seen teams generate hundreds of qualified leads from simple landing pages.

Usability testing with 5–8 users reveals friction points that metrics alone cannot expose. One session where a user says “I don’t understand what this does” is worth more than 100 analytics events showing bounce rates.

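Concierge MVPs deepen user research by engaging early customers in direct, manual interactions. Demand can also be tested before anything is built: Dropbox’s famous video MVP, a demo video rather than a concierge service, generated 75,000+ email signups before the final product was built.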

A/B testing quantifies which design variations drive desired behaviours. Landing page tests can show conversion differences of 35–200% between variations.
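
A large relative lift on a small sample can still be noise, so it helps to check significance before acting on a result. The sketch below uses a standard two-proportion z-test built on Python's standard library; the function name `ab_test` and the visitor and conversion counts are hypothetical, and this is one common way to evaluate a test rather than the only one.

```python
from math import sqrt
from statistics import NormalDist


def ab_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float, float]:
    """Two-sided two-proportion z-test.

    Returns (relative lift of B over A, z statistic, p-value).
    """
    if n_a <= 0 or n_b <= 0 or conv_a <= 0:
        raise ValueError("need positive sample sizes and at least one conversion in A")
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled conversion rate under the null hypothesis that A and B perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("cannot test when every visitor converted")
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    lift = (rate_b - rate_a) / rate_a
    return lift, z, p_value


if __name__ == "__main__":
    # Hypothetical landing page test: 1,000 visitors per variation.
    lift, z, p_value = ab_test(conv_a=40, n_a=1000, conv_b=58, n_b=1000)
    print(f"lift={lift:.0%}  z={z:.2f}  p={p_value:.3f}")
```

In this made-up example, variation B shows a 45% relative lift, yet the p-value comes out at roughly 0.06, just short of the conventional 0.05 threshold. A sensible next step would be to keep the test running rather than declare a winner.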

Why human-centred design outperforms AI automation

While AI-powered design tools accelerate prototyping, they come with trade-offs that become critical during MVP validation phases.

Human-centred design-led teams uncover user needs competitors overlook, creating defensible differentiation. AI excels at generating variations on known patterns, but MVPs require discovering unknown user behaviours and mental models.

Many successful startups adopt a hybrid approach: Use AI tools to accelerate repetitive tasks whilst maintaining human-led decision-making around UX strategy. The result is 30–40% faster development cycles whilst preserving creative direction.

For MVPs specifically, human designers provide greater ROI than pure automation because MVPs require rapid learning from user feedback, rather than template application.

Case studies: Brilliant MVP decisions in practice

Uber’s MVP wasn’t a comprehensive transportation platform. It solved one specific problem: calling a luxury car service via smartphone. Key decisions included focusing on one service type, simple payment flow, and rapid iteration based on user feedback.

Airbnb’s MVP validated market fit with a minimal technical build. The founders rented out air mattresses in their own apartment during a conference that had sold out local hotels, using only a basic website. The deliberately minimal design forced validation of the core hypothesis before building infrastructure.

Slack’s MVP focused on solving specific communication problems: scattered conversations and slow information discovery. Rather than building every possible chat feature, the team identified one acute problem and solved it exceptionally well.

Your MVP decision framework

Based on our experience with international clients, here’s our recommended framework:

Before design begins: Identify your single core hypothesis, define minimum success criteria, and set non-negotiable constraints.

During feature prioritisation: Apply the core value test, estimate learning vs cost ratio, and plan your next validation cycle.

During design and development: Maintain scope discipline, test assumptions early, and document learnings.

The most successful MVPs we’ve partnered with weren’t polished products. They were focused experiments designed to answer specific questions about user behaviour, market demand, and product-market fit.

Conclusion

At Refresh, we believe MVPs are learning tools, rather than finished products. The evidence consistently points to the same conclusion: design decisions at the MVP stage compound throughout the product lifecycle.

The startups that win won’t be those with the most features. They’ll be the ones that made smarter design decisions early, validated assumptions faster, and learned from users before scaling.

Ready to make strategic MVP decisions that validate your startup’s potential?